Hola! I’m Oscar Mañas. I’m a Research Scientist at Meta Superintelligence Labs on the Media Generation team in Zurich. I recently completed my PhD at Mila and Université de Montréal, advised by Prof. Aishwarya Agrawal.

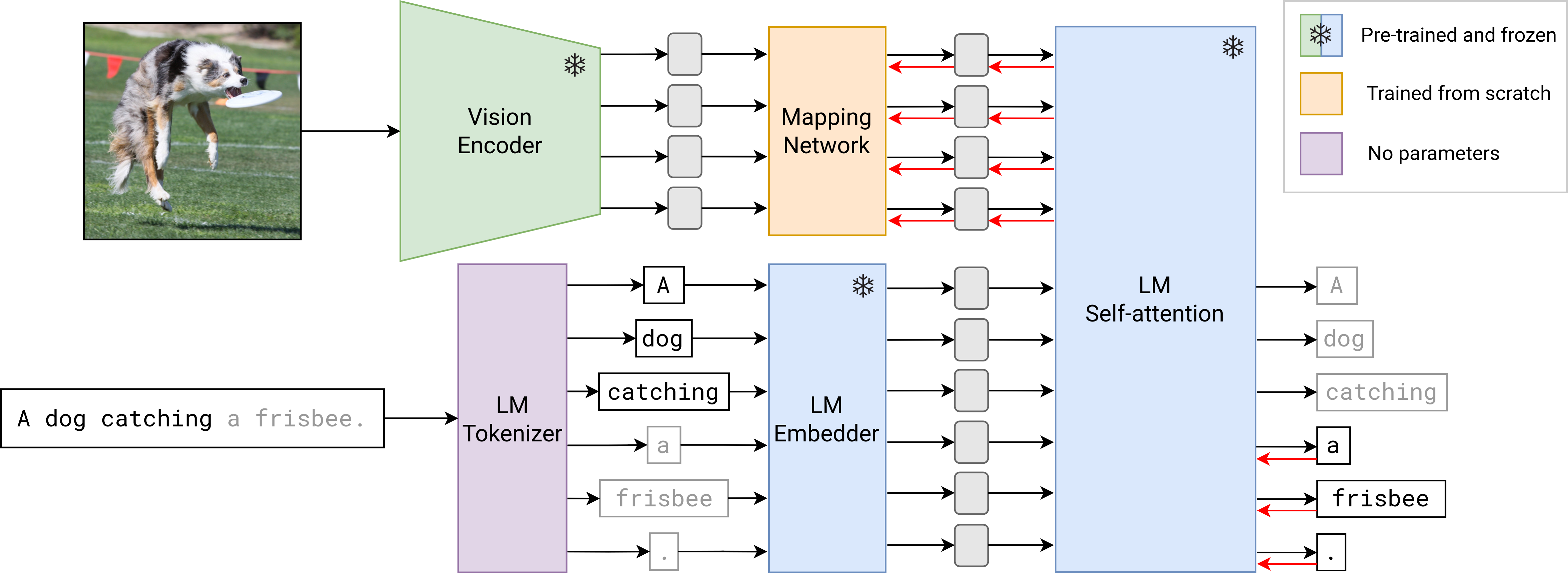

My research explores the intersection of vision and language, with a focus on multimodal generative models: systems that generate images, videos, and text from multimodal inputs. I’m especially interested in building models that reason fluidly across modalities, treating them as complementary channels of perception and thought.

Previously, I was a Visiting Researcher at Meta FAIR and a Research Intern at Element AI. See my CV for more details.

News

- Feb 2026 PhD thesis published: Towards efficient, reliable and measurable vision-language systems

- Dec 2025 Spotlight talk at the Deep Learning Barcelona Symposium (recording)

- Nov 2025 Defended my PhD thesis and graduated from Mila / Université de Montréal

- Oct 2025 Started as Research Scientist at Meta Superintelligence Labs, Media Generation team, Zurich

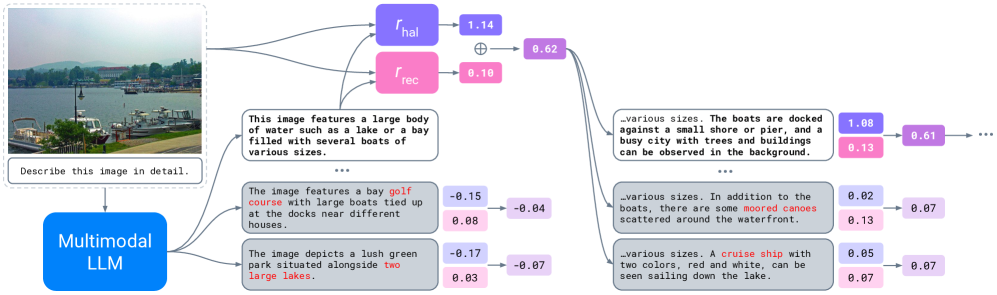

- Jun 2025 Paper accepted at ICCV 2025: Controlling Multimodal LLMs via Reward-guided Decoding

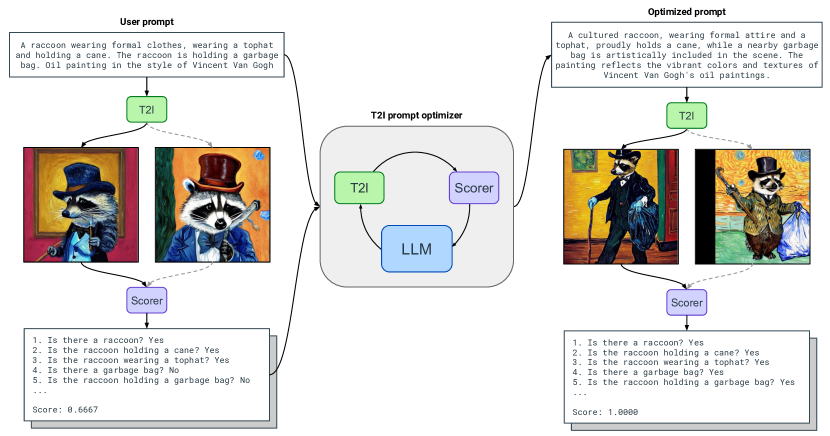

- Jun 2024 Paper accepted at TMLR: Improving Text-to-Image Consistency via Automatic Prompt Optimization

- Jan 2024 Started as Visiting Researcher at Meta FAIR, Montreal

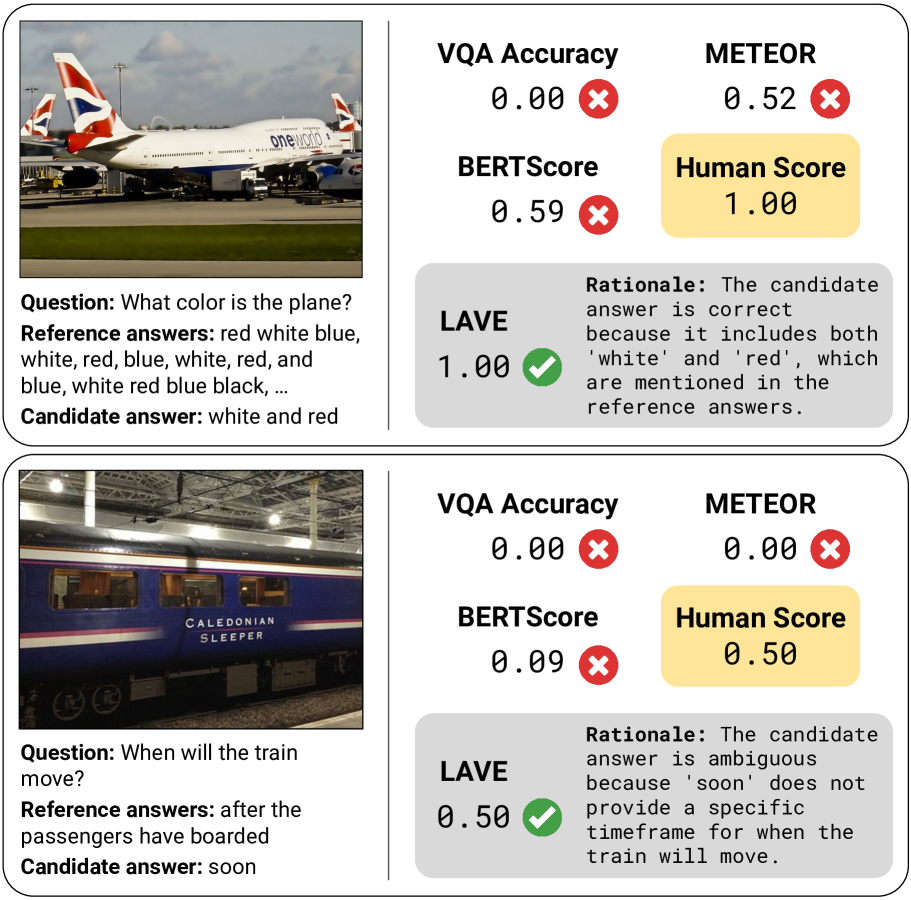

- Dec 2023 Paper accepted at AAAI 2024: Improving Automatic VQA Evaluation Using Large Language Models

- Jun 2023 Started as Research Scientist Intern at Meta FAIR, Montreal

Selected Publications

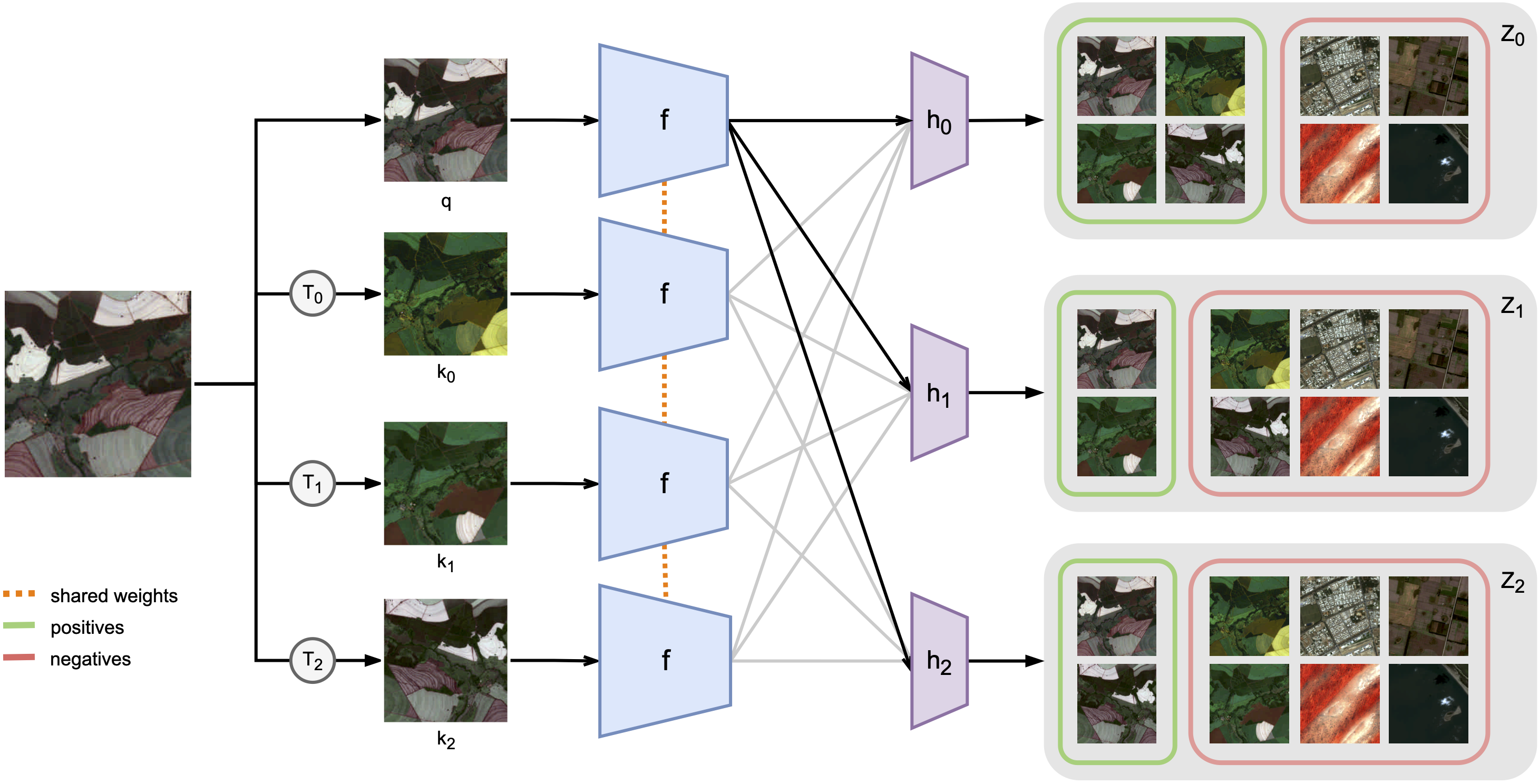

Seasonal Contrast: Unsupervised Pre-Training from Uncurated Remote Sensing Data

ICCV 2021

First-author paper
